If you’ve ever pasted a piece of writing into Originality.ai, GPTZero, or Turnitin and watched it spit back “87% AI-generated,” you’ve probably had the same question I hear every week from the teams I consult:

How does the tool actually know?

And the follow-up question, usually asked more quietly:

Can I trust it?

I’ve spent the last few years training companies to use AI responsibly. As a result, I’ve also spent a lot of time on the other side of the equation: testing the tools that claim to detect it. This guide is my attempt to explain what’s actually happening inside an AI content detector, in language that doesn’t require a computer science degree.

No hype. No marketing copy. Just an honest explanation of the machinery — including why these tools sometimes get it badly wrong.

The short answer

AI content detectors don’t “read” your text the way a human does. Instead, they look at statistical patterns in word choice and sentence structure. Then they compare those patterns to what they’ve learned about human writing versus AI-generated writing.

Essentially, they’re asking: “Does this look like the kind of writing I’ve seen come out of large language models, or does it look like the kind of writing humans produce when they’re a bit messy, inconsistent, and unpredictable?”

That’s the whole idea in one sentence. However, the rest of this guide unpacks what that means in practice — and why that approach has real limits.

Why this is harder than it sounds

Before we get into the mechanics, it helps to understand the core problem these tools are trying to solve.

The cat-and-mouse problem

Modern AI writing tools — ChatGPT, Claude, Gemini, and the rest — were trained on enormous amounts of human writing. Billions of web pages, books, articles, and forum posts. Their entire job is to produce text that sounds like a human wrote it. As a result, they’re very good at it.

So when an AI detector tries to tell human writing from AI writing, it’s not looking for obvious “tells” like bad grammar or robotic phrasing. Those problems barely exist anymore. Instead, it’s looking for subtle statistical fingerprints — patterns so small that a human reader would never notice them.

A useful analogy

Think of it like this: imagine two musicians playing the same song. A casual listener can’t tell them apart. However, a sound engineer with the right software can look at the waveform and spot which one is the human performer and which is a very good AI synthesizer. That’s because real human performance has tiny inconsistencies, while AI output has a slightly too-smooth quality.

In short, AI detectors are doing something similar, but with words instead of sound waves.

The two measurements almost every detector uses

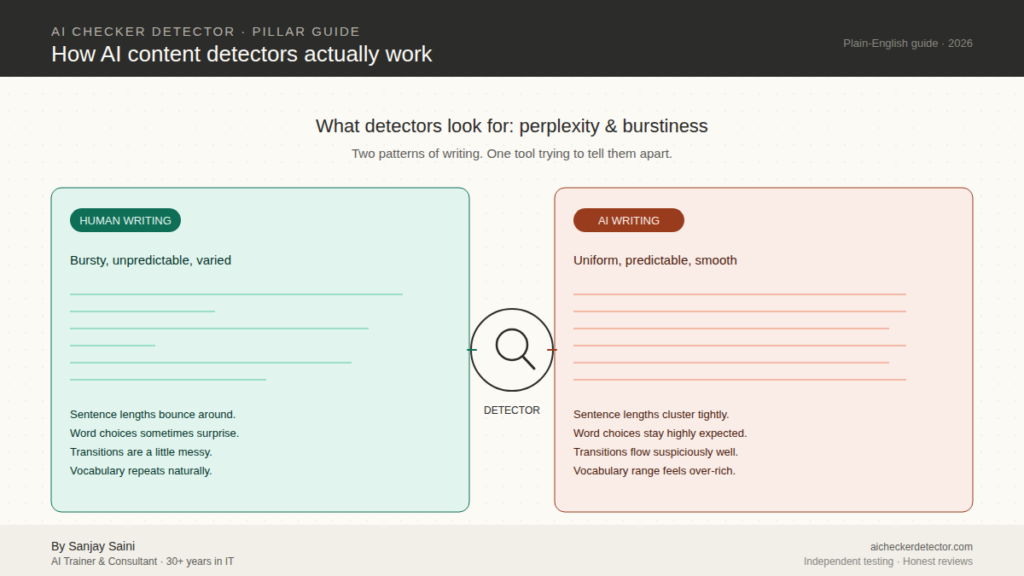

If you only remember two terms from this guide, remember these: perplexity and burstiness. Nearly every major AI detector — GPTZero, Originality.ai, Winston AI, Copyleaks, and the rest — is built around some version of these two ideas.

Perplexity: how surprised the model is

Perplexity measures how predictable a piece of text is to a language model.

Here’s the intuition. Imagine you’re reading a sentence word by word, and after each word, you have to guess the next one. Some sentences are very easy to predict:

“The sun rose in the ___”

You guessed “east.” So did everyone else. So would an AI model. This sentence has low perplexity — the words are exactly what you’d expect.

Now try this one:

“The sun rose in the toaster.”

That word doesn’t fit. You’re surprised by it. The sentence has high perplexity — the words aren’t what the model expected.

Here’s the key insight: AI-generated text tends to have low perplexity overall. That’s because large language models are literally designed to pick the most probable next word at each step. Essentially, they’re very good at producing text that “flows naturally” — which is another way of saying they pick words that are statistically expected.

Humans, on the other hand, are weirder. We pick unusual words. Sometimes we change direction mid-sentence. Occasionally, we use a word nobody would predict because it just felt right. As a result, human writing has bumpier, less predictable perplexity.

So when a detector looks at your text and calculates that the word choices are suspiciously predictable, it starts leaning toward an “AI-generated” verdict.

Burstiness: how varied your sentences are

Burstiness measures variation — particularly how much the length and complexity of your sentences bounce around across a piece of writing.

Human writers are bursty. For instance, we write a long, winding sentence that rolls through several ideas, and then we stop. Short punch. Then we might write a medium-length sentence to set up the next paragraph, followed by another long one that explores a tangent.

AI tends not to do this. Without specific prompting, most large language models produce writing with more uniform sentence length — a steady stream of medium-length, medium-complexity sentences. Competent. Readable. But weirdly flat if you measure it statistically.

Consequently, when an AI detector sees text where sentence lengths cluster tightly around an average, and sentence complexity stays oddly consistent, that’s another strike toward “AI-generated.”

Putting them together

A piece of writing that has low perplexity (too predictable) and low burstiness (too uniform) is the classic signature of AI output. That’s what most detectors are looking for at the most basic level.

But — and this is critical — this approach catches certain kinds of writing that happen to share those features even when no AI was involved. We’ll come back to that.

What else detectors look for

Perplexity and burstiness are the foundation. However, modern detectors layer additional signals on top. The bigger commercial tools typically examine:

Word-choice fingerprints. Large language models have favorite words. For example, they over-use certain connectors (“moreover,” “furthermore,” “in essence”), certain intensifiers (“truly,” “remarkable,” “pivotal”), and certain sentence openers. As a result, some detectors keep running catalogs of words that appear disproportionately in AI output and flag text that leans heavily on them.

Sentence-structure patterns. Meanwhile, AI tends to produce a particular rhythm. Think three-part lists (“not only X, but also Y, and importantly Z”), balanced clauses, and a fondness for certain grammatical constructions. Detectors look for these patterns clustering together.

Vocabulary range. In addition, human writers usually have a narrower working vocabulary within a single piece than AI does. When I’m writing for my consulting clients, for instance, I tend to reuse the same twenty or thirty domain-specific terms. AI, by contrast, draws on its enormous training set and often sprinkles in synonyms and variations that a real human author wouldn’t reach for.

Topic-transition smoothness. Similarly, humans transition between ideas a bit awkwardly. We leave gaps, make sudden jumps, or repeat ourselves. AI, on the other hand, produces very smooth, almost suspiciously well-organized flow between topics.

Model-specific fingerprints. Finally, some detectors — particularly newer ones like Pangram Labs and more recent versions of Originality.ai — are trained to recognize the specific “accent” of individual language models. They can often tell not just whether text is AI-generated but which model produced it (GPT-5 versus Claude versus Gemini). These fingerprints come from training the detector on large samples of output from each specific model.

How the actual machinery works

Now let’s walk through what happens when you paste text into a detector and click “check.” Here’s roughly what goes on under the hood:

Step 1: Tokenization. Your text is broken into tokens — small chunks that are usually a word, a part of a word, or a punctuation mark. “Unbelievable” might become three tokens: “un,” “believ,” and “able.”

Step 2: Scoring each token. For every token in your text, a language model calculates: “How likely was this exact token to appear next, given everything that came before?” This generates a probability score for each token.

Step 3: Looking at the distribution. The detector looks at the overall pattern of those probability scores. Are they clustered tightly in the “highly expected” zone? That’s an AI signal. Are they spread out, with plenty of surprising choices? That’s a human signal.

Step 4: Running additional checks. Modern detectors layer in the pattern-recognition signals I listed above — vocabulary, structure, transitions, model-specific fingerprints.

Step 5: Producing a verdict. The detector combines all these signals using its trained classifier and outputs a score. Usually this is expressed as a percentage (e.g., “78% AI-generated”) or a category (Human / AI / Mixed).

The details vary from tool to tool. Some detectors use a single large model to do all of this at once. Others chain several smaller models together. Some — like GPTZero — are transparent about breaking their analysis down sentence-by-sentence so teachers and editors can see exactly which parts of a submission were flagged.

Why detectors get it wrong

Here is the part most “best AI detector” articles skip over, because it’s uncomfortable for the tools they’re trying to sell you. AI detectors are wrong a measurable amount of the time. Moreover, the ways they’re wrong are not random. Instead, they follow patterns that matter for anyone relying on them.

False positives — flagging humans as AI

A false positive is when the tool says “this is AI-generated” but it was written by a human. Unfortunately, these are the cases that ruin careers and cause academic accusations.

The groups most likely to be falsely flagged:

- Non-native English speakers. If you learned English as a second language and tend to write in a slightly more formal, careful register, detectors often flag your work as AI. For example, one study from Stanford found detectors flagged essays by non-native English writers at dramatically higher rates than native speakers, even when both were fully human-written.

- Highly structured technical writers. Engineers, lawyers, and scientists often write in ways that naturally have low burstiness and low perplexity because their fields reward clarity and consistency. As a result, detectors penalize this style.

- Students writing to a rubric. Similarly, academic writing that follows a strict structure — “introduction, three body paragraphs, conclusion” — looks statistically similar to AI output.

- Writers who use grammar-checking tools. If you run your writing through Grammarly, QuillBot’s paraphraser, or similar tools, you’ve smoothed out exactly the human irregularities that detectors rely on to clear you.

This isn’t a hypothetical problem. Vanderbilt University publicly disabled Turnitin’s AI detection in 2023 after finding the false-positive rate in real-world use was unacceptably high. Likewise, Curtin University in Australia made a similar decision in 2026. When major research universities are turning these tools off, that tells you something.

False negatives — missing actual AI

On the flip side, a false negative is when the tool says “this looks human” but it was actually written by AI.

The most common ways detectors miss AI content:

- Text that’s been lightly edited. Take AI output and have a human change a few words in each paragraph. As a result, many detectors drop from 95% accuracy to under 60% with this single step.

- Text run through a humanizer tool. Products like Undetectable AI, Walter Writes, and Phrasly exist specifically to make AI writing unfingerprint-able. Specifically, they deliberately inject the burstiness and perplexity detectors look for.

- Output from less common models. In addition, most detectors are trained primarily on GPT-family output. Therefore, text from smaller, more obscure models sometimes slips through.

- Very short text. Finally, anything under about 300 words doesn’t give detectors enough statistical signal. GPTZero and others openly acknowledge that their accuracy drops significantly on short passages.

How accurate are they, really?

You’ll see claims of “99.98% accuracy” on tool marketing pages. However, take every such number with enormous skepticism. That’s because the accuracy a tool reports is usually measured on the tool maker’s own test set, using the kinds of AI text and human text they chose to include.

Based on the independent testing I’ve done and the peer-reviewed research I’ve reviewed, here’s a more honest picture of the current (April 2026) landscape:

- On clearly AI-generated text from major models, the best detectors (GPTZero, Originality.ai, Pangram Labs, Copyleaks) catch between 85% and 99% of samples. The best tools cluster near the top of that range.

- On human-edited AI text, however, accuracy drops to roughly 50–75% even for the best tools.

- On text that’s been through a humanizer, most detectors fail outright. In fact, the “humanizer versus detector” arms race is currently being won by the humanizers.

- False positive rates on genuinely human text vary wildly. For example, GPTZero claims under 1% for submissions over 300 words, while independent testing sometimes finds higher rates, especially for non-native English writers and highly structured professional writing.

The practical takeaway: no AI detector is accurate enough to justify a life-altering decision — firing a writer, failing a student, rejecting a candidate — based on its output alone. These are signals, not verdicts.

What a responsible workflow looks like

After all of this, you might be wondering if AI detectors are worth using at all. My answer is: yes, but with discipline. Here’s the workflow I recommend to clients:

Treat the detector score as one data point, not the verdict. If a detector flags something as AI-generated, that should trigger a conversation or a closer look — not a punishment.

Run the text through more than one tool. No single detector is reliable enough on its own. If three detectors agree, that’s a much stronger signal than one screaming “95% AI.”

Look at sentence-level breakdowns where available. Tools like GPTZero and Copyleaks show you which sentences are suspicious. If the flagged sections all cluster in one paragraph that clearly differs in style from the rest, that’s more meaningful than a whole-document percentage.

Consider context. Is this a technical writer producing standards-compliant prose? A non-native English speaker? A student following a rigid rubric? All of these push detector scores up for perfectly innocent reasons.

Ask. Before you accuse. In every real workplace or academic situation I’ve seen mishandled, the damage was done when someone took a detector score as proof and acted on it without talking to the writer. Always talk first.

The road ahead

The cat-and-mouse game between AI writers and AI detectors isn’t going to end anytime soon. Every new generation of language models makes detection harder. In response, each generation of humanizers narrows the gap further. Meanwhile, detection tools are incorporating new signals — semantic coherence analysis, writing-style fingerprints that persist across documents, and even cross-referencing with known AI training data.

For the foreseeable future, the honest position is this: AI detection is a useful signal, not a truth oracle. The tools will keep getting better, and they’ll keep getting fooled. Ultimately, the organizations that handle this well will treat detector output as the start of a conversation — and the ones that handle it badly will make painful mistakes they can’t easily undo.

If you want to go deeper into how specific tools compare and which one fits your use case, our tool reviews and head-to-head comparisons are the best next step. If you’re dealing with a specific situation — a false accusation, a content-verification workflow for a team, a policy decision for your institution — feel free to get in touch.

Frequently Asked Questions

Q1 – Can AI detectors be 100% accurate?

No. Every current detector has a measurable false-positive rate and false-negative rate. Any tool claiming 100% accuracy is making a marketing claim, not a scientific one.

Q2 – Can Turnitin detect ChatGPT?

Turnitin’s AI detection catches a significant portion of straightforward ChatGPT output, but it struggles with edited AI text and has a documented false-positive problem serious enough that several major universities have turned it off.

Q3 – Why did an AI detector flag my human writing?

The most common reasons are: you’re a non-native English speaker, you write in a highly structured professional style, your piece is shorter than about 300 words, or you ran it through a grammar/paraphrasing tool that smoothed out your natural inconsistencies.

Q4 – What’s the most accurate AI detector in 2026?

It depends on what you’re detecting. For catching unedited AI in long-form content, GPTZero, Originality.ai, and Pangram Labs lead in independent testing. For educational settings where false positives matter most, GPTZero’s low false-positive rate makes it the safer choice. Our full comparison guide covers this in depth.

Q5 – Can humanizer tools really beat AI detectors?

In 2026, yes — at least temporarily. Tools like Undetectable AI, Walter Writes, and Phrasly can often push AI-generated text past most detectors. Detectors are responding by training on humanizer output, but the arms race is currently favoring the humanizers.

Q6 – Should I trust a single detector’s verdict?

No. Run your text through at least two or three detectors before drawing any conclusions, and treat the results as a signal that invites investigation — not as proof.

Have a tool you want us to test next? Spotted something in this guide you disagree with? Email me – sanjay@agilewow.com – I read every message.

About the author: Sanjay Saini has 30+ years of experience in the IT industry and currently works as an AI trainer and consultant, helping businesses adopt AI responsibly.