By Sanjay Saini, AI Trainer and Consultant, 30+ years in IT

Last updated: [DATE]

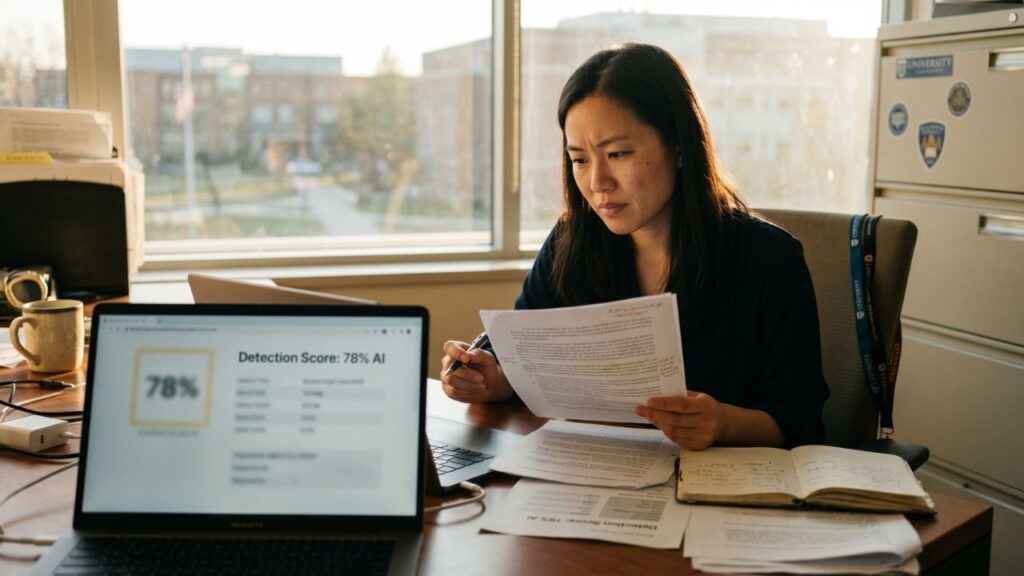

Walk onto the homepage of any major AI detector and you will see confident numbers. Winston AI says 99.98% accuracy. Originality.ai says 99%. GPTZero says 99% on its own benchmark. Turnitin implies something similar when it markets to universities.

If those numbers were true, this would be a very short article. But they are not, and the gap between what the tool makers claim and what independent testing actually finds has widened dramatically in 2026.

I have spent the last couple of years training businesses and teams on how to use AI responsibly, which means I also spend a lot of time looking at the detection side of the equation. Clients ask me constantly whether they can trust a particular detector score. The honest answer is complicated, and it deserves more than a marketing number.

This guide pulls together independent benchmarks, peer-reviewed studies, and real testing data from the last twelve months to answer one question properly. How accurate are AI detectors in 2026, and when should you actually trust them?

The short answer

AI detectors are reasonably good at catching raw, unedited output from older AI models. They are significantly worse at everything else people actually do with AI in 2026. Accuracy on realistic mixed content sits between 60% and 80% for the best tools. False positive rates range from about 1% to nearly 30% depending on the tool, the text, and, critically, who wrote it.

No detector currently on the market is accurate enough to justify a life-changing decision based on its score alone. That is not my opinion. It is what the data says.

The claimed versus measured accuracy gap

Let us start with the cleanest way to see the problem, which is lining up vendor claims next to independent test results.

Winston AI markets a 99.98% accuracy figure. Originality.ai markets 99%. GPTZero markets 99% on its own Chicago Booth benchmark. A 2026 Scribbr study that tested Originality.ai on varied content found overall accuracy of 76%. A PCWorld test of GPTZero produced an accuracy figure of 62%. An independent review by Humanize AI Pro, which tested 500 documents in February 2026, found GPTZero’s real accuracy sat at 88% overall, with 67.5% accuracy on the mixed human and AI content that actually describes how most people write.

That is a gap of 11 to 37 percentage points between the 99% headline numbers and what these independent testers measured. The reason is not that any of these companies are lying. It is that they test on clean, unedited AI output generated in laboratory conditions, and they publish those numbers as if they describe real-world performance. They do not.

A Supwriter benchmark in March 2026 put it bluntly after testing eight detectors on 150 samples. Not a single tool exceeded 80% overall accuracy. The closest was Originality.ai at 79%, followed by Copyleaks and GPTZero. This is the opposite of the picture you get from any of these companies’ homepages.

Where the numbers come from

Before I show you more figures, it is worth being clear about whose data I trust and why. Independent testing of AI detectors is hard to do well. You need controlled samples, diverse text types, current AI models, and ideally multiple passes through each tool to check consistency. Most online comparisons do none of this.

The studies and benchmarks I have drawn on for this guide are:

A 2026 Stanford Human-Centered AI report on detection performance across major models

A University of Chicago study on detector behaviour on short-form content

The RAID benchmark from the University of Pennsylvania, which measures how detectors behave when you constrain their false positive rates

A February 2026 paper in the International Journal for Educational Integrity, accessible through Springer, which evaluated Originality.ai and Turnitin across 192 texts

The Weber-Wulff et al. study, which continues to be one of the most cited pieces of independent testing in this space

Independent benchmarks from Scribbr, Supwriter, Humanize AI Pro, and Fritz.ai published in early 2026

A meta-analysis Originality.ai itself commissioned, which aggregated 14 separate studies

You will notice I am citing some studies that are commissioned by detector companies themselves. I include these because even vendor-commissioned meta-analyses tend to show numbers that are far below the marketing claims, which tells you something in itself.

Accuracy on raw AI content

The one area where AI detectors genuinely perform well is on unedited output from major language models. If you copy a ChatGPT response and paste it into a detector without changing a word, the best tools will usually catch it.

On raw GPT-4 and GPT-5 output, the 2026 Stanford HAI report put top-tier detector accuracy at between 94% and 96%. Independent testing of GPTZero, Originality.ai, Copyleaks, and Turnitin in controlled conditions consistently shows detection rates above 90% on this kind of clean AI input.

So if your use case is catching somebody who has pasted raw AI output into an essay, a blog post, or a piece of journalism without any editing at all, the tools work. That is the scenario the vendor marketing numbers describe, and it is a real scenario. It is just not the scenario most of us are actually dealing with.

Where accuracy falls apart

Four things make detector accuracy drop, often dramatically. They are worth understanding individually because they compound in the real world.

Light human editing

Taking an AI-generated draft and making small changes is the single most common way people use AI in 2026. According to the Supwriter benchmark, light editing, which they define as synonym swaps and minor restructuring, dropped detection rates by 15 to 25 percentage points across every tool they tested. Heavy editing, where a writer significantly rewrites and adds original ideas, dropped detection rates by 30 to 45 percentage points.

In other words, a detector claiming 95% accuracy on raw AI text might sit at 70% to 80% on lightly edited AI text and at 50% to 65% on heavily edited text, the low end of which is no better than a coin flip.

Humanizer tools

This one has changed the landscape in 2026. Tools like Undetectable AI, Walter Writes, Phrasly, and several others are specifically designed to rewrite AI output so it passes detection. They work by injecting the kinds of irregularities that detectors rely on as a human signal.

Axis Intelligence reported in March 2026 that after three passes through a quality humanizer, no tested detector consistently identified content as AI-generated. GPTZero’s detection rate on humanized content fell to around 18%. Originality.ai does better than most on this front, but still misses a majority of humanized content.

The humanizer versus detector arms race is currently being won by the humanizers, and this is something no detector vendor wants to discuss on their homepage.

Newer and smaller models

Most detectors were trained primarily on outputs from OpenAI’s GPT family. As models have diversified in 2026, with users shifting to Claude, Gemini, Llama, Mistral, and especially smaller, faster models like GPT-5-mini, detector performance has become uneven.

Fritz.ai’s 2026 analysis found that Originality.ai catches only 31.7% of GPT-5 content and just 7.3% of output from GPT-5-mini, the most popular OpenAI model among actual users in 2026. If the content you are trying to catch was produced by the model most people are actually using, this particular detector misses nearly everything.

Short text

Almost every detector’s accuracy collapses on short input. A University of Chicago study found that most detectors struggle significantly on content under 50 words. GPTZero, to its credit, has been publicly honest about this, stating that false positive rates rise sharply for submissions under 300 words.

The reason is statistical. Detectors need enough text to see the patterns they are looking for. A short Slack message, a two-sentence email, a 200-word discussion board post: none of these gives the tool enough signal to draw a reliable conclusion.
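To make the statistical point concrete, here is a minimal Python sketch. It assumes, as a simplification of my own rather than any vendor's documented method, that a detector averages a noisy per-word signal across the whole text. The function name `average_score_spread` and the noise model are illustrative only.

```python
import random
import statistics

def average_score_spread(n_words, trials=500, seed=0):
    """Std dev of a document-level average built from n_words noisy per-word scores.

    Each per-word score is drawn from a standard normal distribution.
    That noise model is an assumption for illustration, not a real detector.
    """
    rng = random.Random(seed)
    averages = [
        statistics.fmean(rng.gauss(0.0, 1.0) for _ in range(n_words))
        for _ in range(trials)
    ]
    return statistics.stdev(averages)

# Compare a short post, a 300-word submission, and a long essay.
for n in (25, 50, 300, 1000):
    print(f"{n:>5} words: spread of the averaged score = {average_score_spread(n):.3f}")
```

Under this assumption the spread of the averaged score shrinks roughly with the square root of the word count, so a 50-word post produces a far noisier score than a 1,000-word essay.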

The false positive problem

If accuracy is the question everyone asks, false positives are the question more people should be asking. A false positive is when a detector flags human writing as AI. These are the cases that ruin careers and get students wrongly accused.

The false positive rates from independent testing vary wildly by tool:

Turnitin sits at about 1% to 7% in most testing, though a Washington Post study using a smaller sample found a 50% false positive rate on certain content types

GPTZero ranges from 1% to 18%, with the higher figures appearing in tests involving non-native English speakers

Originality.ai’s false positive rate has been measured at 2% in its own commissioned study and 14% to 28% in some independent testing, depending on content

Copyleaks typically comes in around 1% to 5%

Sapling hit 28% in the Supwriter benchmark, meaning more than one in four human writers would be told their work is AI-generated

ZeroGPT also fares badly in the RAID benchmark, where its false positive rate plateaus at 16.9%

There is a pattern in this data that anyone relying on these tools needs to understand. The tools with the strictest detection, which are often the ones catching the most AI content, also tend to have the highest false positive rates. This is not a bug. It is a mathematical trade-off in how these systems work. You cannot crank up sensitivity without also cranking up the rate of wrongful flags.
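The trade-off can be sketched in a few lines of Python. The two score distributions below are hypothetical, chosen only so that human and AI scores overlap. They are not measured from any real detector, and `rates_at` is an illustrative helper of my own.

```python
from statistics import NormalDist

# Hypothetical detector scores in the 0 to 1 range. Assumed for illustration.
human_scores = NormalDist(mu=0.35, sigma=0.15)
ai_scores = NormalDist(mu=0.65, sigma=0.15)

def rates_at(threshold):
    """Return (detection rate, false positive rate) when scores above threshold are flagged."""
    detection = 1 - ai_scores.cdf(threshold)
    false_positive = 1 - human_scores.cdf(threshold)
    return detection, false_positive

# Lowering the threshold makes the detector stricter.
for threshold in (0.7, 0.6, 0.5, 0.4):
    detection, false_positive = rates_at(threshold)
    print(f"threshold {threshold:.1f}: catches {detection:.0%} of AI text, "
          f"flags {false_positive:.0%} of human text")
```

Whatever the real distributions look like, as long as they overlap, every step that catches more AI text also flags more human text.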

Who gets wrongly flagged

The raw false positive number is only part of the story. When you look at who those false positives happen to, the picture gets worse.

A Stanford study by Liang and colleagues, originally published in 2023 and still widely cited because no later study has contradicted it meaningfully, found that AI detectors misclassified over 61% of essays written by non-native English speakers as AI-generated. On essays by native English speakers, those same detectors performed near-perfectly. The researchers identified the reason as a statistical coincidence. Non-native English writers tend to use slightly more formal grammar, more common vocabulary, and more structured sentence patterns, all of which happen to resemble the statistical fingerprint of AI output.

Neurodivergent writers face a similar issue. Writers with autism, ADHD, or dyslexia often use writing patterns like highly structured organisation, repetitive phrasing, or unusual syntax that coincidentally increase their false positive risk.

Students writing to a rigid academic rubric, technical writers producing standards-compliant prose, lawyers writing legal documents, and engineers writing documentation all produce text that detectors systematically penalise. Not because any of them used AI. Because their natural writing happens to look, statistically, a lot like what these tools were trained to flag.

This is the problem Vanderbilt University ran into when it publicly disabled Turnitin’s AI detection feature, and it is why Curtin University in Australia followed suit in 2026. When research universities with large institutional budgets decide these tools are too unreliable to use, that should register as important information.

Inconsistency is its own problem

One of the most revealing findings in the Supwriter benchmark was a test most people never think to run. They submitted every sample twice, separated by at least 24 hours, and checked whether the same tool gave the same answer.

Turnitin was the most consistent at 96% identical results. Sapling was the least consistent at 84%, which means it gives different answers on the same text roughly one time in six. If a tool cannot agree with itself, how much weight should anyone place on a single score from it?

Inconsistency typically clusters on borderline cases, where the detector’s confidence is already low. But borderline is exactly where most real-world content sits in 2026, because real-world content is rarely pure AI or pure human anymore.
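If you want to run the same repeat-submission check on a tool you use, the calculation is simple. This is a minimal sketch, not Supwriter's actual method. The verdict lists are made up, and `agreement_rate` is a name I have chosen for illustration.

```python
def agreement_rate(first_run, second_run):
    """Share of samples that received the same verdict on both runs."""
    if len(first_run) != len(second_run) or not first_run:
        raise ValueError("runs must be non-empty and the same length")
    same = sum(a == b for a, b in zip(first_run, second_run))
    return same / len(first_run)

# Made-up verdicts for six samples, submitted twice at least 24 hours apart.
run_1 = ["ai", "human", "ai", "human", "human", "ai"]
run_2 = ["ai", "human", "human", "human", "human", "ai"]

rate = agreement_rate(run_1, run_2)
print(f"agreement: {rate:.0%}, disagreement: {1 - rate:.0%}")
```

An agreement rate of 84% leaves 16% disagreement, which is where the "roughly one time in six" figure comes from.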

The realistic accuracy picture

Pulling all of this together, here is what the honest picture of AI detector accuracy looks like in April 2026, based on the data I trust:

On raw, unedited AI text from major models, top-tier detectors catch between 90% and 96% of samples

On lightly edited AI text, accuracy falls to roughly 55% to 75%

On heavily edited or humanized text, accuracy falls further, often below 40%

On mixed content, where a human and AI both contributed, the best figure cited in this guide is 67.5% accuracy

On text under 300 words, accuracy and false positive rates both get worse

For non-native English speakers, false positive rates can be two to three times higher than for native speakers

For the smaller AI models that most people actually use day to day, detection rates are often below 30%

If you are looking at a detector score and trying to decide what it means, all of these caveats need to be in the room with you.
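One way to keep those caveats in view is to ask how often a flag would be wrong. The sketch below applies Bayes' rule. The share of AI-written submissions is an assumption you have to supply yourself, since none of the studies above can tell you that for your own classroom or inbox, and the example rates are drawn loosely from the ranges quoted in this guide.

```python
def chance_flag_is_wrong(ai_share, detection_rate, false_positive_rate):
    """Probability that a flagged text was actually written by a human (Bayes' rule)."""
    flagged_ai = ai_share * detection_rate
    flagged_human = (1 - ai_share) * false_positive_rate
    return flagged_human / (flagged_ai + flagged_human)

# Assumption: 10% of submissions are AI-written and the detector catches 90% of them.
for fpr in (0.01, 0.05, 0.28):
    wrong = chance_flag_is_wrong(ai_share=0.10, detection_rate=0.90, false_positive_rate=fpr)
    print(f"false positive rate {fpr:.0%}: {wrong:.0%} of flags point at a human writer")
```

The lower the share of AI-written text in what you are checking, the more of the flags land on human writers, even when the false positive rate looks small on paper.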

When the tools are still worth using

I am not arguing that AI detectors are useless. I still recommend them to my consulting clients, and I still use them on my own work. The key is using them the right way.

They are useful as a first-pass signal. If you run a piece of content through two or three reputable detectors and they all flag it as likely AI, that is worth investigating. Not as proof, but as a prompt for a conversation.

They are useful for catching lazy AI use. The kind of content that gets caught easily is the kind where somebody has made zero effort to review or edit what the AI produced. In many contexts, catching that is enough.

They are useful as an educational tool. Showing a student or a junior writer what their own work scored, and explaining why, is often a better conversation than an accusation.

They are useful for self-checking. If you have written something yourself and a detector flags it, that is information about how your writing style compares statistically to AI output. Sometimes it tells you to vary your sentence rhythm. Sometimes it tells you nothing at all.

What they are not is a reliable basis for an accusation, a firing, a grade penalty, or a hiring decision.

What to actually do

If you are an educator, a content manager, a recruiter, or anybody else making real decisions based on AI detector output, here is the workflow I recommend.

Run every piece of content through at least two or three different detectors before treating the result as meaningful. Agreement between tools is more reliable than any single score.

Look at sentence-level breakdowns where the tool offers them. A whole-document percentage of 70% AI tells you less than knowing which three paragraphs look suspicious and why.

Consider context. Is this writer a non-native English speaker? A neurodivergent student? A legal writer or engineer producing highly structured prose? All of these push scores up for reasons that have nothing to do with AI.

Talk to the writer before you act. In every case I have seen mishandled in client organisations, the damage happened because somebody took a detector score as proof and acted without a conversation. Always ask first.

Review your institutional policy. If you are running an organisation that uses AI detection in any consequential way, the Vanderbilt and Curtin decisions are worth studying. Some organisations have decided the false positive risk outweighs the detection benefit, and they are not wrong to make that call.
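For teams that want to encode this workflow, here is a rough Python sketch. Everything in it is an assumption for illustration: the `Submission` fields, the detector names, and the thresholds are mine, and no real detector API is being called. Note that no branch returns a verdict. The strongest outcome is a conversation.

```python
from dataclasses import dataclass

@dataclass
class Submission:
    scores: dict                      # detector name -> AI-likelihood score from 0 to 1
    word_count: int
    style_inflates_scores: bool = False  # e.g. non-native English, rigid rubric, legal prose

def triage(sub, flag_at=0.8, min_detectors=2, min_words=300):
    """Suggest a next step. All thresholds are illustrative assumptions."""
    if len(sub.scores) < min_detectors:
        return "run more detectors"
    if sub.word_count < min_words:
        return "too short to rely on"
    flagged = [name for name, score in sub.scores.items() if score >= flag_at]
    if len(flagged) < len(sub.scores):
        return "not flagged by every detector: no action"
    if sub.style_inflates_scores:
        return "scores may reflect writing style: talk to the writer"
    return "talk to the writer"

# Hypothetical scores from two unnamed detectors.
example = Submission(scores={"detector_a": 0.91, "detector_b": 0.88}, word_count=850)
print(triage(example))
```

The design choice that matters is the return values. A detector score can move a piece of writing into the "ask about it" pile, but nothing in the function penalises anyone.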

Where this goes next

The cat and mouse game between AI writers and AI detectors is not slowing down. Each new generation of language models produces text that is harder to distinguish from human writing. Each new humanizer tool narrows the gap further. Detectors are adding new signals like semantic coherence analysis and writing-style fingerprints, but the fundamental problem is not going away.

For anyone reading this in the hope of a clean answer, I am sorry to disappoint. The accurate answer in 2026 is that these tools are useful signals, not truth oracles. The people and institutions that treat detector scores as conversation starters will keep getting value from them. The ones that treat scores as verdicts will keep making painful mistakes.

If you want to go deeper on how specific tools compare, our tool reviews cover each one in detail, and the pillar guide on how AI detectors actually work explains the underlying mechanics this accuracy picture rests on.

Frequently Asked Questions

How accurate are AI detectors in 2026?

On raw, unedited AI text, the best detectors reach 90% to 96% accuracy. On the lightly edited or mixed content that describes most real use in 2026, accuracy falls to between 55% and 80% depending on the tool. On humanized AI content, most detectors fall below 40% accuracy.

Which AI detector is the most accurate?

Based on independent testing, Turnitin has one of the lowest false positive rates but also the lowest raw detection rate. GPTZero has a strong reputation for balance. Originality.ai scores highest on aggressive detection but has higher false positive rates. No single tool is clearly best, and the right choice depends on whether missing AI or wrongly flagging humans would be more costly in your specific use case.

Why do AI detectors flag human writing as AI?

Detectors look for patterns like predictable word choice and uniform sentence length. Non-native English speakers, technical writers, students following strict rubrics, and neurodivergent writers all naturally produce text that happens to share these statistical features, which is why they are flagged at much higher rates than other groups.

Can AI detectors be fooled?

Yes, easily. Light editing drops detection rates by 15 to 25 percentage points. Humanizer tools can push AI content past most detectors after a few passes. Smaller or less common AI models and text under 300 words both significantly reduce detector accuracy.

Should schools and universities use AI detectors?

Several major universities including Vanderbilt and Curtin have publicly disabled AI detection tools after finding the false positive rate unacceptable in real-world use. Other institutions continue to use them as one input among several. The answer depends on how the score is used. As a conversation starter, it can be useful. As grounds for penalty, the error rate is currently too high.

Is GPTZero accurate?

Independent testing puts GPTZero’s accuracy between 62% and 88% depending on content type, which is significantly below the 99% the company claims on its homepage. It performs well on raw AI content and has a relatively low false positive rate compared to some competitors, but it struggles with edited content, humanized content, and short text.

Have a testing result that contradicts what is in this guide? A tool you want me to run through the same analysis? [Email me](mailto:[YOUR EMAIL]). I read every message and update this guide when the data shifts.

About the author: Sanjay Saini has 30+ years of experience in the IT industry and works as an AI trainer and consultant, helping businesses adopt AI responsibly. This guide is based on independent benchmarks, peer-reviewed research, and hands-on testing conducted through AI Checker Detector’s testing methodology.